Lessons Learned from the Minab School Strike

AI Decision-Support Systems in Targeting and the Duty to Verify

“We will unleash experimentation, eliminate bureaucratic barriers, focus our investments and demonstrate the execution approach needed to ensure we lead in military AI, […] we will become an ‘AI-first’ warfighting force across all domains.” – Pete Hegseth, U.S. Secretary of War

This ambition appeared to materialise when the U.S., together with Israel, struck Iran on 28 February 2026. U.S. officials confirmed that artificial intelligence (AI) was used in the operation to process intelligence and assist analysts in identifying potential targets, enabling target selection at unprecedented speed. Within the first four days alone, nearly 2,000 targets were reportedly struck.

The risks posed by such systems were starkly evident from the outset of the operation, when a missile struck the Shajareh Tayyebeh girls’ school in Minab, killing at least 165 schoolgirls and injuring more. Internal U.S. investigations, reported by the New York Times (NYT), suggest the strike resulted from the school being incorrectly identified as a military base during the targeting process due to outdated data.

The internal report denies that the misidentification was caused by the deployed technology, instead claiming it was a “common – but sometimes devastating – human error in wartime.”

However, separating ‘human error’ from the AI systems used in targeting may be an oversight, as such systems shape how intelligence is processed and decisions are made, raising both factual and legal questions.

Under international humanitarian law, Art. 57 of Additional Protocol I (AP I) requires parties to take all feasible precautions to verify targets. The Minab strike raises the question of how reliance on an AI-based Decision-Support System (AI-DSS) affects compliance with this obligation. This article argues that if AI-DSSs are integrated into the targeting process, then the duty to verify must also include precautionary measures that ensure the AI system’s accuracy and reliability.

The Facts: Minab School Strike

Journalistic investigations after the lethal strike focused on how the school came to be selected as a target (for example, see here, here and here). The school was located next to a complex of buildings associated with the Islamic Revolutionary Guard Corps (IRGC). However, investigations confirmed that the school was physically separated from the military compound. By the time of the strike, the site had long been converted into a clearly identifiable civilian school.

As briefly mentioned above, it was reported that the targeting coordinates used by the U.S. Central Command were derived from outdated intelligence data provided by the Defense Intelligence Agency (DIA). This data incorrectly labelled the school as a military objective. The data appears not to have been sufficiently re-verified against updated imagery or other intelligence sources before the strike was carried out, a lapse that was attributed to the fast-moving early phases of the operation.

Investigators are also examining the role of the National Geospatial-Intelligence Agency (NGA), which provides satellite imagery used to verify potential targets. According to the NYT, when the targeting information provided by the DIA is outdated, intelligence officers are expected to consult data from the NGA in order to reassess and verify the target’s nature prior to carrying out a strike.

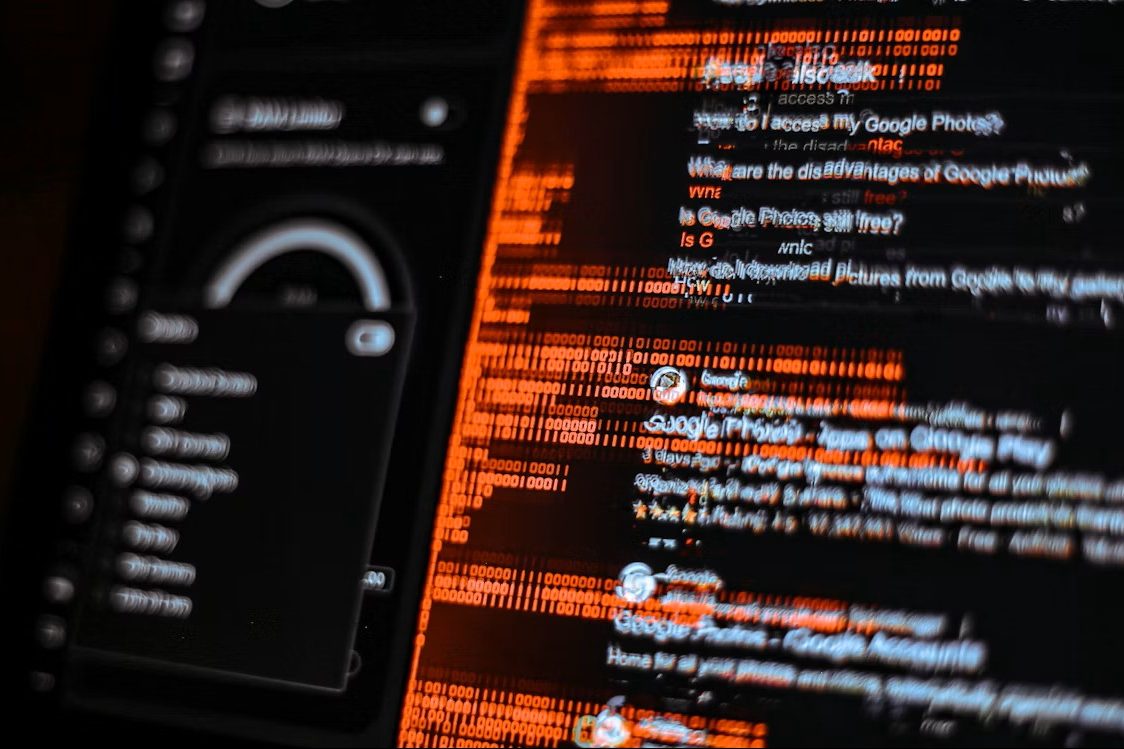

The NGA uses the Maven Smart System (MSS), an AI-enabled intelligence platform that processes large volumes of satellite and surveillance data to highlight areas of interest to analysts. Within this system, the large language model Claude, developed by Anthropic, has reportedly been used to analyse incoming intelligence and support targeting processes. It was even reported that the system has been used to generate target recommendations.

Before the Strike: The Obligation to Verify Targets

In armed conflicts, parties are required to take all feasible measures to verify that their envisioned targets are in fact military objectives. This principle is not only articulated in Art. 57 AP I, but is also considered customary international law. This obligation reflects the broader principle set out in Art. 57 para. 1 AP I, which states that constant care must be taken during military operations to spare the civilian population, civilians and civilian objects.

There is no set of general measures that must be taken before each strike in order to comply with Art. 57 para. 2 (a) (i) AP I, since each strike is individual and requires distinct measures of verification. In cases of attacks conducted at a distance, commanders typically rely on information provided by intelligence services and reconnaissance assets, as reported in the case of the Minab school attack. This, however, does not absolve the commander of the responsibility to assess the reliability of that information (para. 2195).

International jurisprudence reinforces this expectation. The ICTY’s Final Report on the NATO bombing campaign emphasised that commanders must establish an effective intelligence system capable of collecting and evaluating information about potential targets, and must ensure that available technical means are used to properly identify them (para. 29).

The requirement to do ‘everything feasible’ refers to what is practicable or possible at the time and in the circumstances of the attack (para. 2198). This depends on factors such as the available information, verification time and the technical means available. However, the obligation’s underlying purpose remains constant: to minimise the risk of civilians or civilian objects being mistakenly targeted.

Verification in the Age of AI

The obligation to verify remains the same regardless of the technological tools used in the targeting process. Where an AI-DSS is used, the requirement to ensure the system's accuracy and reliability can be understood as forming part of the verification obligation under Art. 57 para. 2 (a)(i) AP I. Alternatively, it may be considered part of the obligation to take all feasible precautions when choosing the means and methods of an attack, as set out in Art. 57 para. 2 (a)(ii) AP I. The question of whether AI-DSSs constitute a means or method of warfare has been discussed in the context of Art. 36 AP I, which governs the legal review of new weapons. By specifically referring to means and methods of an attack rather than warfare in general, Art. 57 para. 2 (a)(ii) AP I indicates (para. 2188) that its obligations apply to the verification required for a specific targeting decision rather than the broader regulation of warfare technologies.

At the same time, the obligation to verify must be understood as applying to the entire targeting process, including the stages at which AI-DSSs are involved. As Wolf notes, errors can arise at multiple stages of the targeting process, particularly during the classification of objects. If MSS was in fact deployed in the Minab school strike, the verification measures fell short at the classification stage of the targeting process. Due to false data, the AI-DSS could have identified the school building as a military objective, resulting in a false positive identification. However, as Milanovic argues, journalists were able to identify the school building as civilian using only open-access sources. This raises serious doubts as to whether military operators were unable to do the same. Accordingly, such verification measures appear to have been feasible.

This misidentification of the school was described as a ‘human error’. While the human operator may have taken the final decision to launch the strike, this characterisation risks overlooking the broader targeting process in which AI-DSSs may have been involved. Target verification rarely involves a single decision, but is instead the result of a chain of intelligence collection, analysis and classification. Even if the ultimate error occurred at the final stage of decision-making, earlier stages of this process may have been influenced by AI systems. Of course, misidentification of targets can occur in purely human-driven processes as well. However, the deployment of AI-DSSs may amplify certain risks within the verification process.

AI-Specific Risks for the Duty to Verify

The integration of AI-DSSs introduces risks that affect how the obligation to verify is implemented in practice. These risks do not arise solely from the technology itself, but from the manner in which such systems influence how intelligence is processed, interpreted and relied upon during the targeting cycle.

The first concern relates to the use of outdated or unreliable data. As the Minab school strike shows, the misidentification of the school appears to have come from outdated intelligence that wrongly labelled the building as a military target. While inaccurate intelligence is not a new problem in warfare, AI-DSSs may exacerbate this risk. Systems designed to process and structure large volumes of information can rapidly incorporate outdated or incorrect data into the analytical process. This can potentially propagate errors through the targeting chain if the underlying information is not verified independently.

The second risk concerns automation bias – i.e. “the inclination of humans to trust machines more than themselves or other experts” (Kücking et al., p. 298). AI-DSSs often highlight points of interest, classify objects and sometimes generate target recommendations. Even when such outputs seem purely advisory, human operators may attribute a higher degree of reliability to the system’s analysis. This is often due to the way an AI-DSS filters, selects, and presents the data it ‘deems’ relevant. This can result in users relying on system outputs without adequately scrutinising the underlying intelligence, which weakens the critical assessment required by Art. 57 para. 2 AP I.

Finally, the opacity of some AI systems can make the verification process more difficult. In the Minab case, for example, the role of outdated intelligence data only became apparent post hoc. While retrospective identification of errors may improve future practices, limited transparency in AI-assisted analysis can make it difficult for users to understand how a system arrives at a particular classification during the targeting process itself. If the internal processes through which AI systems acquire and analyse intelligence are difficult to examine, this raises the broader question of whether relying on such systems can satisfy the obligation to take all feasible precautions to verify the nature of a target.

These risks are not just technical or factual; they also affect how the obligation to verify must be implemented in order to fulfil its overall purpose. Where targeting relies on AI-DSSs, taking “all feasible precautions” requires not only an assessment of the reliability of the underlying intelligence, but also of the technological systems through which that intelligence is processed. Commanders cannot satisfy the verification obligation by simply having a human decision-maker present if the information shaping that decision is generated or filtered by opaque or insufficiently validated AI systems.

What Minab Teaches About Verification in AI-Assisted Warfare

The Minab school strike illustrates how failures in the verification process can have devastating consequences for civilians. Although the misidentification of the school has been characterised as human error, this overlooks the fact that AI-supported systems are increasingly shaping the targeting process.

While the legal threshold remains the same, the way in which it is met does not. As AI-DSSs become integrated into targeting, the duty to verify cannot be limited to reviewing the final targeting decision alone. Rather, it must also include critical scrutiny of the technological systems that shape the decision-making process.

Therefore, the integration of AI-DSSs expands the scope of the verification obligation: commanders must not only verify the target itself, but also critically assess the reliability of AI-assisted intelligence systems used in the targeting process. If warfare is becoming “AI-first”, the verification process must become AI-aware.

Natali Gbele is a PhD candidate and research assistant at the Chair for International Law and Public Law (Professor Dr. Christian Walter) at LMU Munich. Her research focuses on International Humanitarian Law.